Elton Artificial Intelligence

The frictionless way to handle expenses

Ulises Siriczman

The challenge

Millions of people travel every day for work, and naturally they have travel expenses. At the end of the day, they end up with a big pile of receipts that they have to manually upload to an application in order to be reimbursed. This creates a problem on each side. Employees have to remember to do this or they’ll end up losing money. Employers have no accurate way to know how much an employee is spending, so they are probably reimbursing more or less money than they should. Both situations are uncomfortable.

My role in this project was sole designer, in charge of the whole user experience from the flowcharts to the UI design. I worked alongside a team of developers and the Product Owner from the client side.

The solution

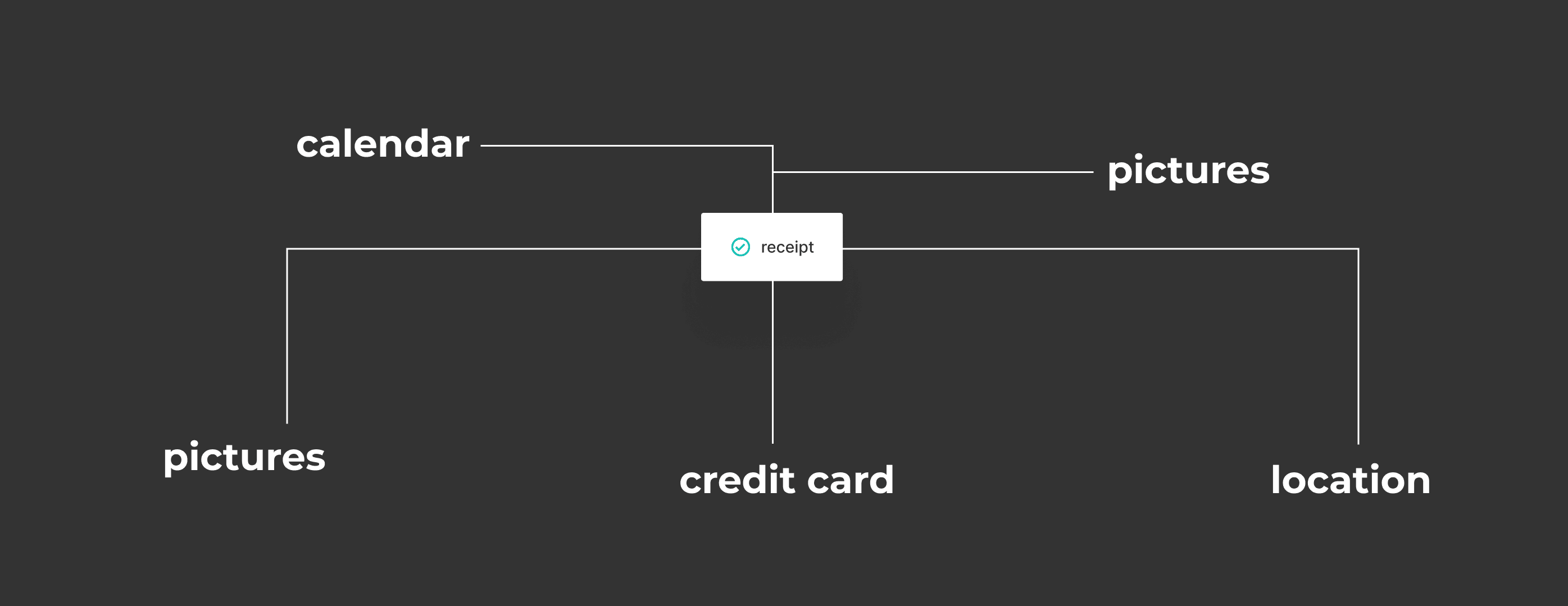

Our strategy was to simplify the busy work so employees can focus on the task at hand. The technology behind the app is an OCR (Optical Character Recognition) system linked to a machine learning engine. This engine uses different sources, like your calendar, your camera and your credit card info, to predict with a growing confidence rate whether the user has valid receipts, and then submits them for approval.


Understanding the problem

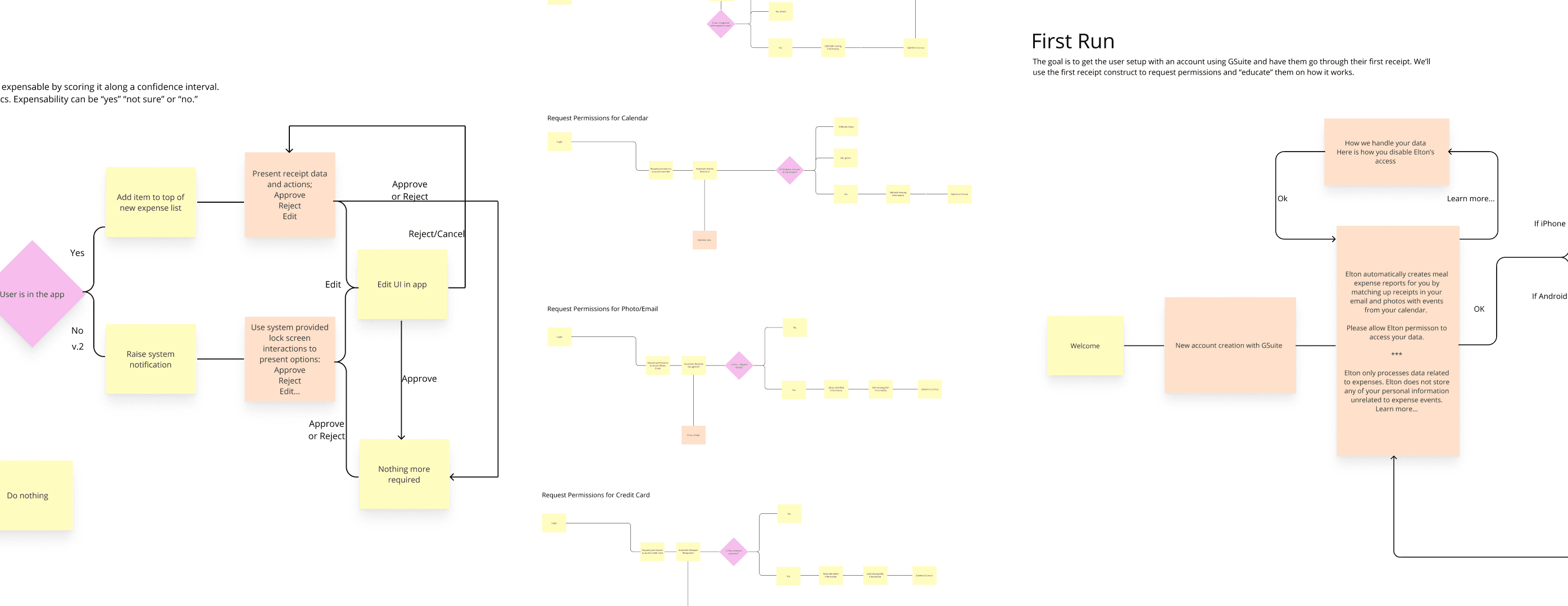

We had several meetings with the client team to learn about their research. Currently, users spend so much time dealing with expenses that they end up dropping the subject altogether. Contrary to most apps, in this case the less time the user spends in the app, the better. The first thing we learned is that for this to work we had to build rapport with the user, because we would be asking them to trust us with really sensitive information.

The process

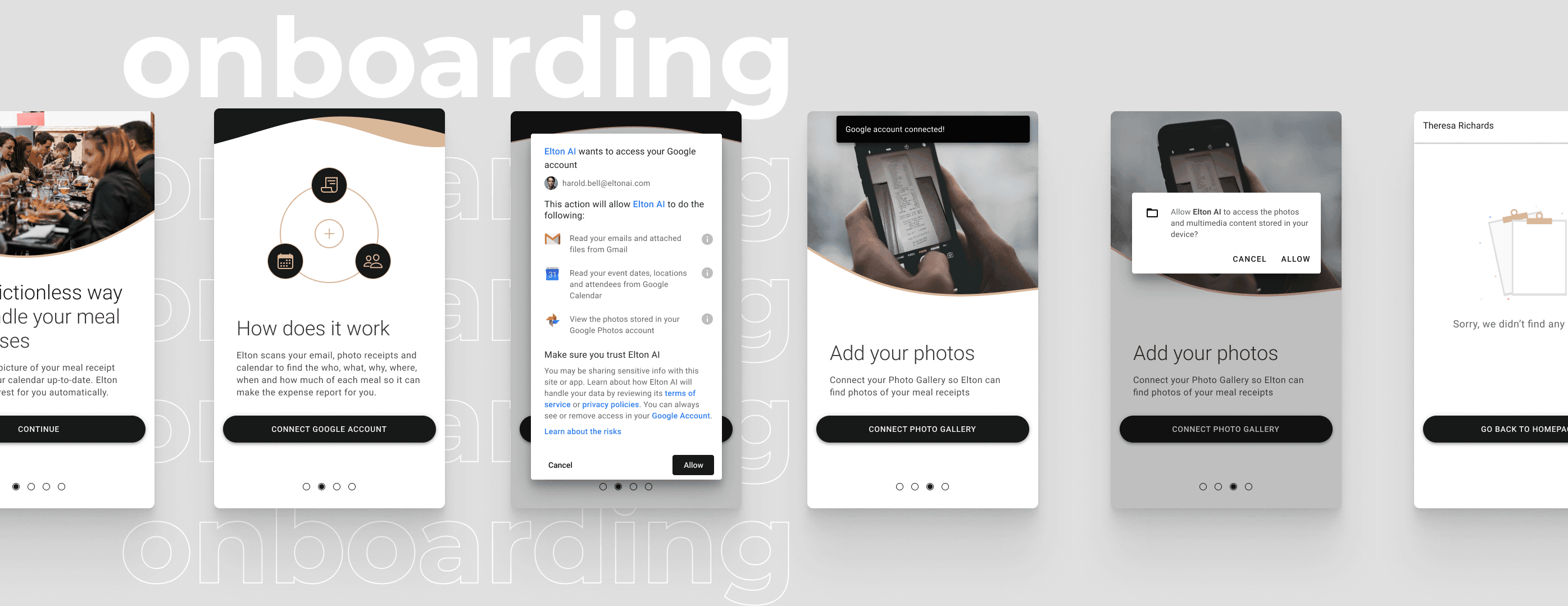

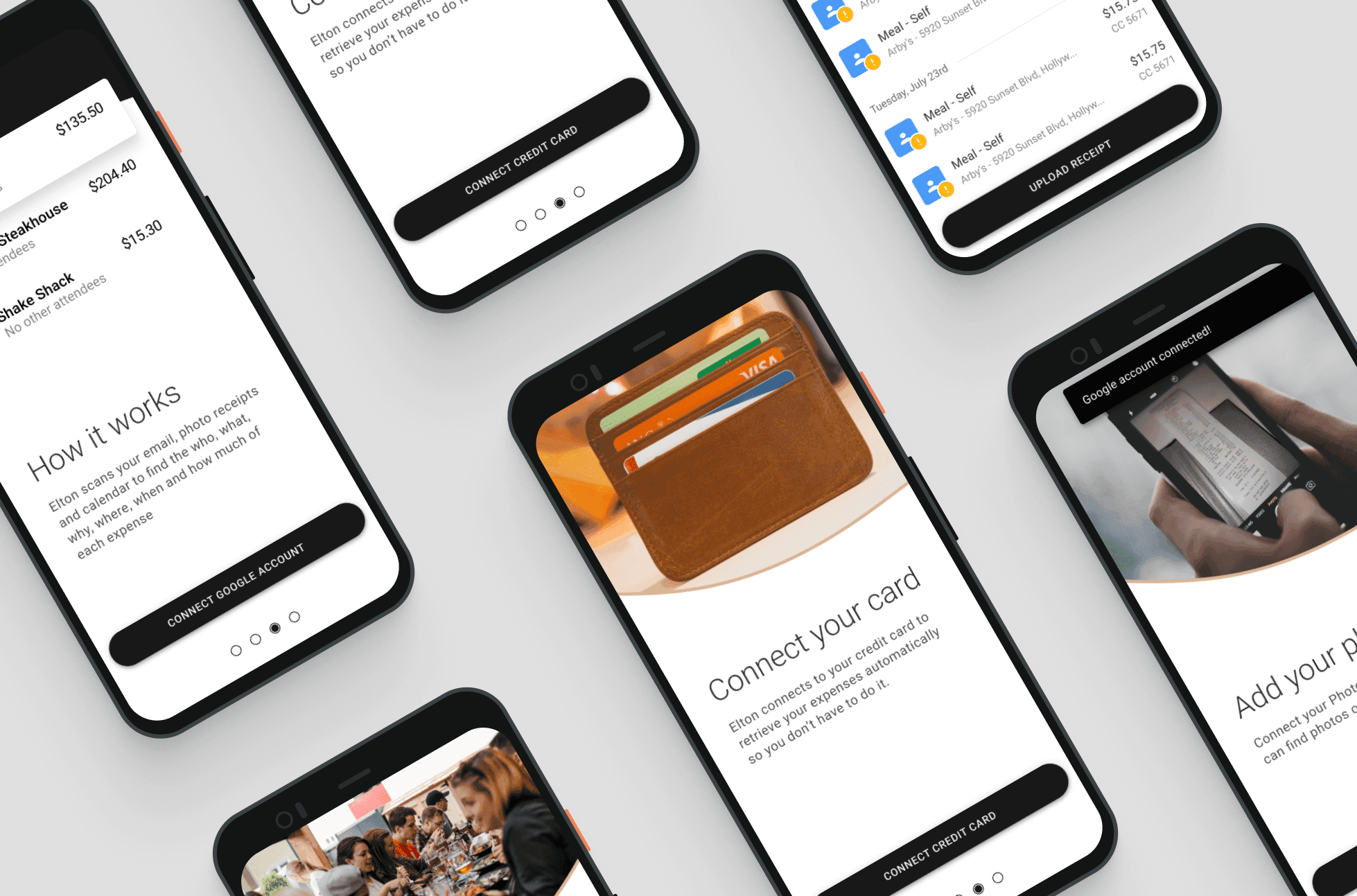

We agreed with the client team that the first step was to work a lot on the onboarding process. We went through several iterations, fine-tuning, testing and reworking everything from the aesthetic to the copywriting, to make sure users trusted the system enough to grant all the necessary permissions. We drafted a lot of possible looks for the app, going from illustration to pictures, and tried several versions of the user flow for this stage. In parallel, the Dev team started focusing their efforts on a consistently reliable machine learning model.


There are no edge cases

In this case, we had to create an almost bulletproof solution. We started working under the assumption that users might not have added their credit card information, and might not have granted access to their calendar, their contacts or their location. After a few interviews we concluded that in order to provide an MVP experience we needed at least the credit card info. It is also the lowest trust threshold, since users are used to entering their credit card info for online purchases. Since we had time constraints and also had to design for both iOS and Android, I worked on a version that could be adapted to both operating systems without much work.
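The minimum-data rule above can be sketched in a few lines of Python. This is only an illustration, not the app's actual code: the source names (`credit_card`, `calendar`, `contacts`, `location`) and the `onboarding_status` function are hypothetical, chosen to mirror the data sources mentioned in the text.

```python
# Hypothetical source names; the only rule taken from the text is that
# credit card info is required for the MVP and the rest is optional.
REQUIRED_SOURCES = {"credit_card"}
OPTIONAL_SOURCES = {"calendar", "contacts", "location"}


def onboarding_status(granted):
    """Report whether the user can start, and what is still missing."""
    granted = set(granted)
    missing_required = REQUIRED_SOURCES - granted
    return {
        # The app is usable as soon as every required source is granted.
        "can_start": not missing_required,
        "missing_required": sorted(missing_required),
        # Optional sources can be requested later, when they become useful.
        "missing_optional": sorted(OPTIONAL_SOURCES - granted),
    }
```

Under this sketch, a user who has only added a credit card can already use the app, and every other source is treated as something to request later rather than as a blocker.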


The 3 states of a receipt

A receipt can have 3 different states: Ready for review, Submitted or Rejected. A receipt is in the first state when it has been identified as a new purchase. It can contain as much or as little information as the user has authorized so far. If the user decides to add missing information, the app requests the corresponding permission so that this step can be skipped next time. A submitted receipt is one that has been identified as a new purchase, has been reviewed by the user and has been submitted for reimbursement. A rejected receipt is one that has been identified as a new purchase, but that the user has determined is not valid for reimbursement by their employer.
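As a rough illustration, the three states can be modeled as a small state machine. The Python sketch below is not the production code: the names `ReceiptState`, `Receipt` and `move_to` are hypothetical, and it assumes that Submitted and Rejected are final states, which the description above implies but does not state outright.

```python
from dataclasses import dataclass
from enum import Enum


class ReceiptState(Enum):
    READY_FOR_REVIEW = "ready_for_review"  # identified as a new purchase
    SUBMITTED = "submitted"                # reviewed and sent for reimbursement
    REJECTED = "rejected"                  # user marked it as not reimbursable


# Assumption: a receipt under review can go either way, and both
# outcomes are final.
ALLOWED_TRANSITIONS = {
    ReceiptState.READY_FOR_REVIEW: {ReceiptState.SUBMITTED, ReceiptState.REJECTED},
    ReceiptState.SUBMITTED: set(),
    ReceiptState.REJECTED: set(),
}


@dataclass
class Receipt:
    merchant: str
    state: ReceiptState = ReceiptState.READY_FOR_REVIEW

    def move_to(self, new_state: ReceiptState) -> None:
        """Change state, refusing transitions the model does not allow."""
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise ValueError(
                f"cannot move from {self.state.value} to {new_state.value}"
            )
        self.state = new_state
```

A new receipt starts as Ready for review, and calling `move_to(ReceiptState.SUBMITTED)` or `move_to(ReceiptState.REJECTED)` settles it. Any further transition raises an error in this sketch.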

Getting the info flowing

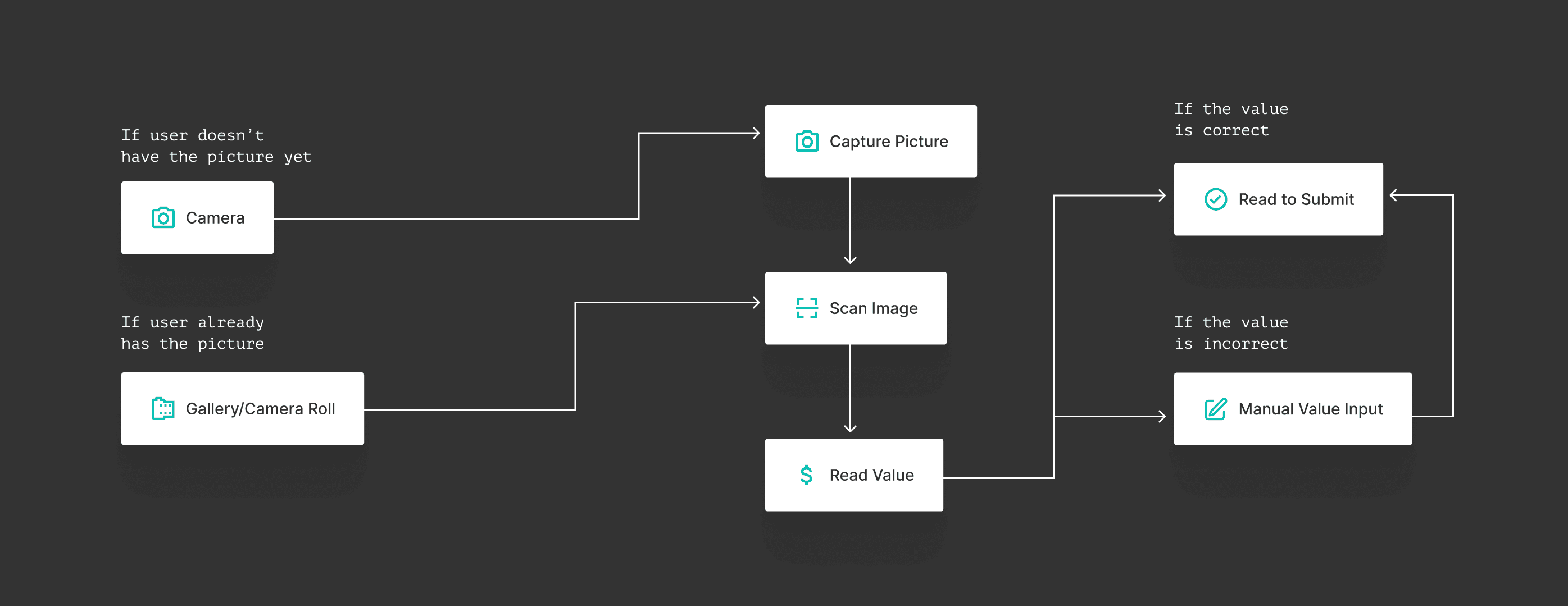

Once the onboarding flow was defined, we began working on the core functionality: uploading receipts for submission. This should happen automatically, which is the core premise behind the app, but users can always add more information to a receipt if the automatic pull from the data sources contained errors or if they didn’t authorize a source in the first place.

The receipt is basically a form with different fields, some of which are completed automatically and some of which are optional for a proper submission. The most critical field was the value on the receipt, because it involved the OCR. If the OCR did not read the value correctly, the user had to enter it.
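One way to picture this form logic is the Python sketch below. It rests on assumptions that are not part of the project description: the field names, the `needs_user_input` helper and the idea of flagging the amount through an OCR confidence score with a 0.9 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical threshold: below this OCR confidence we ask the user.
CONFIDENCE_THRESHOLD = 0.9


@dataclass
class OcrAmount:
    value: Optional[float]  # None when the OCR found no amount at all
    confidence: float       # assumed to range from 0.0 to 1.0


@dataclass
class ReceiptForm:
    amount: OcrAmount
    merchant: Optional[str] = None  # auto-filled from a data source if allowed
    date: Optional[str] = None      # auto-filled from a data source if allowed
    note: Optional[str] = None      # optional, never blocks submission


def needs_user_input(form: ReceiptForm,
                     threshold: float = CONFIDENCE_THRESHOLD) -> List[str]:
    """Return the names of the fields the user still has to fill in or confirm."""
    pending = []
    # The amount is the critical field: an uncertain reading goes to the user.
    if form.amount.value is None or form.amount.confidence < threshold:
        pending.append("amount")
    # Fields that could not be auto-completed fall back to manual entry.
    for name in ("merchant", "date"):
        if getattr(form, name) is None:
            pending.append(name)
    return pending
```

An empty result means the receipt can go straight to review. Anything else tells the UI which fields to highlight for the user.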


The result

This is the end product and its features:


Elton A.I.

- Automatically reads receipts using OCR technology

- Completes receipts with info from data sources

- Lets you reject invalid receipts

- Reads pictures from your Camera Roll or Gallery

- Lets you edit each field manually

- Lets you review each receipt before submission

- Available for iOS and Android

In conclusion, since we were working under time constraints, we couldn’t spend time on fancy UI interactions, and focused our efforts on the onboarding and a functioning machine learning model instead. The final product is an app that allows people on the road to connect different data sources so they can keep up to date with their receipts, and allows employers to keep track of their employees’ expenses.